Our house has speakers in the ceiling in almost every room. This is not something I’ve had before, and I was initially skeptical about their usefulness and fidelity. However, I’ve actually been enjoying having the background music I play while working spread beyond my office. When I leave the room to get coffee or food, it’s nice to have the same music playing in the kitchen and beyond.

Background

As I understand it, very high-end systems have all the speakers in the house wired back to a central location, where a massive multichannel audio system powers them, providing independent audio routing for each room and other neat features like that. Our speakers, on the other hand, are mostly wired to a feed point in each room. The largest “zone” consists of the living room, stairway, bedroom hallway, my office, and the back deck, all of which are wired to the media console in the living room. The master bedroom and bathroom speakers are wired to a single place in the master bedroom, a pattern repeated in the other bedrooms. The large zone covers a lot of the space I care about during the day, but there are times (especially on weekends) when we’d like the other zones to be fed with the same stream.

One solution to this problem would be to reroute the speaker wires from the remote zones to the feed point of the largest main-floor zone. That is hard to do because of a few exterior walls, and it would also require at least a multichannel amplifier to be effective. We also want to be able to do things like pipe the bedroom TV audio to the bedroom speakers at times, and sending that all the way down to the living room just to be amplified and sent back up is kinda silly.

During my workdays, I have an MPD instance that plays my entire music collection on shuffle, which gives me a custom radio station with no commercials. A solution to the multiple-zone problem above should mean that I can hear that stream anywhere in the house. My first thought was to just use Icecast with a client playing the stream in each zone. The downside is that the clients would be badly out of sync, producing reverb or echo effects at the boundary between two zones.

Solution

Turns out, PulseAudio has solved this problem for us, and in an amazingly awesome way. Assuming you have the bandwidth, PulseAudio can send an uncompressed stream via RTP from one node to another. It also has the ability to use multicast RTP and send one stream to … anyone that wants to listen.
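To put “assuming you have the bandwidth” into numbers, here is a back-of-the-envelope sketch in Python. The raw bit rate of 16-bit stereo PCM at 48 kHz is simple arithmetic; the 1200-byte payload per packet and the 40 bytes of RTP/UDP/IPv4 headers per packet are my own assumptions for illustration, not values taken from PulseAudio.

```python
# Rough bandwidth estimate for an uncompressed PCM stream sent over RTP.
# Assumptions (illustrative, not from PulseAudio's source):
#   - 16-bit samples, no compression (like the s16be format used below)
#   - a hypothetical fixed payload of 1200 bytes per packet
#   - 40 bytes of headers per packet: 12 RTP + 8 UDP + 20 IPv4

RTP_UDP_IPV4_HEADER_BYTES = 12 + 8 + 20


def pcm_bitrate(rate_hz: int, channels: int, bits_per_sample: int) -> int:
    """Raw PCM bit rate in bits per second."""
    return rate_hz * channels * bits_per_sample


def wire_bitrate(rate_hz: int, channels: int, bits_per_sample: int,
                 payload_bytes: int = 1200) -> float:
    """PCM bit rate plus per-packet header overhead, in bits per second."""
    pcm = pcm_bitrate(rate_hz, channels, bits_per_sample)
    packets_per_second = (pcm / 8) / payload_bytes
    return pcm + packets_per_second * RTP_UDP_IPV4_HEADER_BYTES * 8


if __name__ == "__main__":
    pcm = pcm_bitrate(48000, 2, 16)
    wire = wire_bitrate(48000, 2, 16)
    print(f"PCM payload:  {pcm / 1e6:.3f} Mbit/s")
    print(f"With headers: {wire / 1e6:.3f} Mbit/s")
    print(f"Share of a 1 Gbit/s link: {wire / 1e9:.2%}")
```

Run as a script, it reports 1.536 Mbit/s of audio and 1.587 Mbit/s with the assumed headers, a small fraction of a wired 100 Mbit/s or gigabit LAN.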

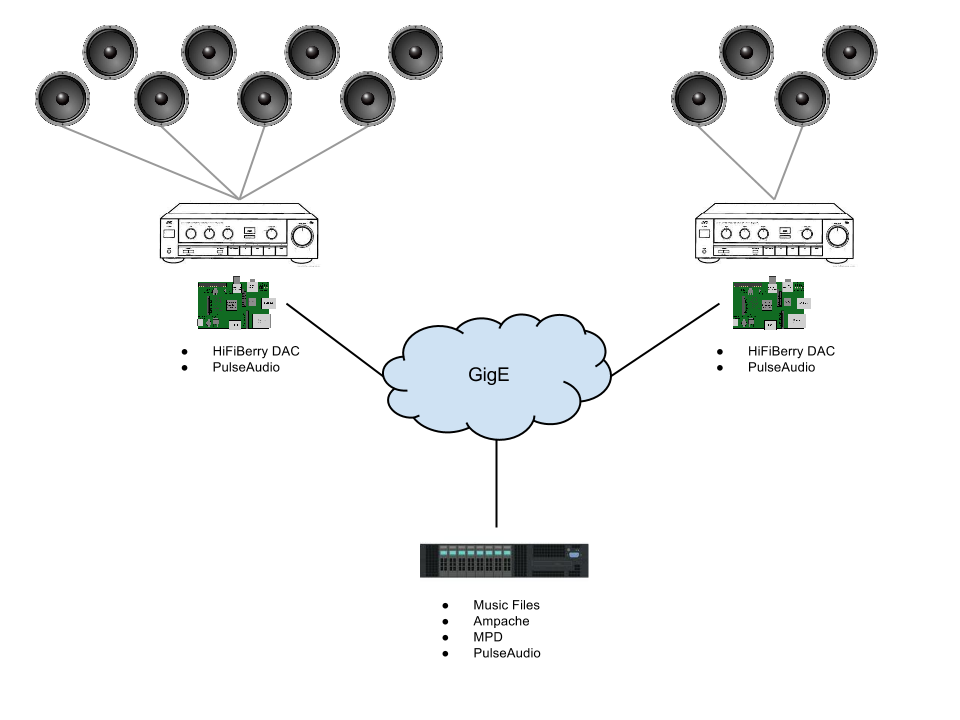

Keeping to just two rooms for the sake of discussion, below is a diagram of what I’ve got now:

At each feed point, I have a Raspberry Pi with the excellent HiFiBerry DAC attached. Each of these just needs a standard Raspbian install with PulseAudio. This provides a quiet, low-power, solid-state source to feed the amplifier and, thus, the speakers at each location. In the server room, I already have a machine with lots of storage that houses the music collection and runs Ampache to provide multi-catalog management of the MPD player. By running MPD on that machine, along with PulseAudio configured for multicast RTP, this machine effectively becomes the “radio station” for the house.

First, configure the server machine. PulseAudio is probably already installed, so all you need to do is enable the null sink and RTP sender by putting this in /etc/pulse/default.pa:

```
load-module module-native-protocol-unix
load-module module-suspend-on-idle timeout=1
load-module module-null-sink sink_name=rtp
load-module module-rtp-send source=rtp.monitor rate=48000 channels=2 format=s16be
```
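module-rtp-send wraps the null sink’s monitor audio in RTP packets. If you want to confirm that packets are leaving the server before touching a Pi, a small Python sketch can decode the fixed RTP header defined in RFC 3550. The group and port you pass to listen() are assumptions: I believe 224.0.0.56 is the module’s default destination, but the port may be picked when the module loads unless you set port=, so take both from the pulseaudio -v output on the server.

```python
# Minimal RTP header decoder (RFC 3550, section 5.1) for checking that
# packets from module-rtp-send reach a machine. Sketch only.
import socket
import struct
from dataclasses import dataclass


@dataclass
class RtpHeader:
    version: int
    padding: bool
    extension: bool
    csrc_count: int
    marker: bool
    payload_type: int
    sequence: int
    timestamp: int
    ssrc: int


def parse_rtp_header(packet: bytes) -> RtpHeader:
    if len(packet) < 12:
        raise ValueError("packet shorter than the 12-byte RTP header")
    b0, b1, seq, ts, ssrc = struct.unpack("!BBHII", packet[:12])
    return RtpHeader(
        version=b0 >> 6,
        padding=bool(b0 & 0x20),
        extension=bool(b0 & 0x10),
        csrc_count=b0 & 0x0F,
        marker=bool(b1 & 0x80),
        payload_type=b1 & 0x7F,
        sequence=seq,
        timestamp=ts,
        ssrc=ssrc,
    )


def rtp_payload(packet: bytes) -> bytes:
    """Return the audio bytes after the header, CSRCs, extension and padding."""
    h = parse_rtp_header(packet)
    offset = 12 + 4 * h.csrc_count
    if h.extension:
        # Extension: 16-bit profile id, then its length in 32-bit words.
        _profile, words = struct.unpack("!HH", packet[offset:offset + 4])
        offset += 4 + 4 * words
    end = len(packet)
    if h.padding:
        end -= packet[-1]  # the last byte counts the padding bytes
    return packet[offset:end]


def listen(group: str, port: int, count: int = 5) -> None:
    """Join a multicast group and print a summary of `count` packets."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    mreq = struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton("0.0.0.0"))
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP, mreq)
    try:
        for _ in range(count):
            data, addr = sock.recvfrom(4096)
            h = parse_rtp_header(data)
            print(f"{addr[0]} pt={h.payload_type} seq={h.sequence} "
                  f"ts={h.timestamp} bytes={len(rtp_payload(data))}")
    finally:
        sock.close()
```

For example, listen("224.0.0.56", 46000), with the port replaced by the one from your logs, prints five packet summaries; steadily increasing sequence numbers suggest the stream is flowing.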

Then configure MPD to use PulseAudio by putting this in /etc/mpd.conf:

```
audio_output {
    type "pulse"
    name "My Pulse Output"
}
```

At this point, you should be able to start MPD, add some music and start it playing.
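If you’d rather check from a script than from an MPD client, the MPD protocol is plain text over TCP: each command ends with a newline, and each response ends with an OK line or an ACK error line. This Python sketch assumes MPD is on localhost at its default port 6600 with no password; it starts playback and returns the fields of the status command as a dictionary.

```python
# Minimal MPD protocol client (plain text over TCP). Assumes MPD listens on
# localhost:6600, its default, with no password configured.
import socket

HOST = "localhost"
PORT = 6600


def parse_response(text: str) -> dict:
    """Turn 'key: value' lines into a dict; raise on an ACK error line."""
    result = {}
    for line in text.splitlines():
        if line == "OK":
            break
        if line.startswith("ACK"):
            raise RuntimeError(line)
        key, _, value = line.partition(": ")
        result[key] = value
    return result


def command(conn, cmd: str) -> dict:
    """Send one command and read lines until OK or ACK."""
    conn.write(cmd + "\n")
    conn.flush()
    lines = []
    while True:
        line = conn.readline()
        if not line:
            raise ConnectionError("MPD closed the connection")
        line = line.rstrip("\n")
        lines.append(line)
        if line == "OK" or line.startswith("ACK"):
            return parse_response("\n".join(lines))


def play_and_report(host: str = HOST, port: int = PORT) -> dict:
    """Start playback and return the fields of the 'status' command."""
    with socket.create_connection((host, port), timeout=5) as sock, \
            sock.makefile("rw", encoding="utf-8", newline="\n") as conn:
        greeting = conn.readline()
        if not greeting.startswith("OK MPD"):
            raise RuntimeError(f"unexpected greeting: {greeting!r}")
        command(conn, "play")
        return command(conn, "status")
```

Once something is in the queue, play_and_report()["state"] should read play; any other state points at MPD itself rather than at the PulseAudio or network side.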

Next, get a Raspberry Pi booted to a fresh Raspbian install. If your DAC needs special configuration, do that now. Otherwise, the (awful) integrated audio should work for testing. In /etc/pulse/daemon.conf, set the following:

```
; Avoid PulseAudio auto-quitting when idle
exit-idle-time = -1
; Don't use floating-point ops to resample
resample-method = trivial
; Default to 48kHz sampling rate
default-sample-rate = 48000
```
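The resample-method = trivial line is there, per its comment, to keep floating-point resampling off the Pi. To show what a trivial resampler boils down to, here is a Python sketch of nearest-preceding-sample rate conversion done purely with integer math. It illustrates the idea only; PulseAudio’s own trivial resampler is written in C and may differ in its details.

```python
# Integer-only "trivial" rate conversion for one channel of samples: each
# output sample copies the input sample at or just before its position,
# with no interpolation or filtering. Illustrative sketch, not PulseAudio's code.
from typing import List


def trivial_resample(samples: List[int], in_rate: int, out_rate: int) -> List[int]:
    if in_rate <= 0 or out_rate <= 0:
        raise ValueError("rates must be positive")
    if in_rate == out_rate:
        return list(samples)  # matching rates: nothing to convert
    out_len = len(samples) * out_rate // in_rate
    # Input index for output i is floor(i * in_rate / out_rate), computed
    # with integer division so no floating-point operations are needed.
    return [samples[i * in_rate // out_rate] for i in range(out_len)]
```

With the sender and receiver both at 48000 Hz, the function returns its input unchanged, which is the situation the configuration above aims for.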

Next, we configure PulseAudio to listen to multicast RTP and play whatever it finds. In /etc/pulse/default.pa:

```
load-module module-rtp-recv
```

Now you should be able to start the daemon and get audio. For debugging, run it in the foreground:

```
pulseaudio -v
```

Within a few seconds, you should see the daemon discover the stream, latch on, and start playing it.
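That discovery step works through SAP announcements: module-rtp-send periodically multicasts a SAP packet whose SDP body describes the stream, and module-rtp-recv listens for them. When a Pi never latches on, decoding those announcements yourself can help narrow things down. This Python sketch follows the SAP header layout in RFC 2974 and pulls the address, port, and rtpmap lines out of the SDP. The announcement group 224.0.0.56 and port 9875 used by listen_sap() are assumptions to verify against your own pulseaudio -v logs.

```python
# Decode SAP announcements (RFC 2974) and the SDP (RFC 4566) inside them.
# Sketch only: IPv4 origins, unencrypted and uncompressed packets.
import socket
import struct


def parse_sdp(text: str) -> dict:
    info: dict = {}
    for line in text.splitlines():
        kind, _, value = line.partition("=")
        if kind == "s":
            info["name"] = value
        elif kind == "c":
            # e.g. "IN IP4 224.0.0.56", possibly with a "/ttl" suffix
            info["address"] = value.split()[-1].split("/")[0]
        elif kind == "m":
            # e.g. "audio 46000 RTP/AVP 10"
            media, port, _proto, *formats = value.split()
            info["media"] = media
            info["port"] = int(port.split("/")[0])
            info["formats"] = formats
        elif kind == "a" and value.startswith("rtpmap:"):
            info.setdefault("rtpmap", []).append(value[len("rtpmap:"):])
    return info


def parse_sap(packet: bytes) -> dict:
    if len(packet) < 8:
        raise ValueError("too short for a SAP header")
    flags, auth_len, msg_hash = struct.unpack("!BBH", packet[:4])
    version = flags >> 5
    ipv6 = bool(flags & 0x10)        # A bit: origin address type
    deletion = bool(flags & 0x04)    # T bit: the stream is going away
    encrypted = bool(flags & 0x02)   # E bit
    compressed = bool(flags & 0x01)  # C bit
    if version != 1 or ipv6 or encrypted or compressed:
        raise ValueError("unsupported SAP packet")
    origin = ".".join(str(b) for b in packet[4:8])
    body = packet[8 + 4 * auth_len:]
    # An optional NUL-terminated MIME type may precede the SDP text.
    if not body.startswith(b"v=0"):
        nul = body.find(b"\0")
        if nul < 0:
            raise ValueError("payload type is not NUL-terminated")
        body = body[nul + 1:]
    return {
        "origin": origin,
        "deletion": deletion,
        "msg_hash": msg_hash,
        "sdp": parse_sdp(body.decode("utf-8", "replace")),
    }


def listen_sap(group: str = "224.0.0.56", port: int = 9875) -> None:
    """Print every announcement seen on the (assumed) SAP group and port."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    mreq = struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton("0.0.0.0"))
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP, mreq)
    while True:
        data, _addr = sock.recvfrom(4096)
        try:
            print(parse_sap(data))
        except ValueError as err:
            print("skipped:", err)
```

If listen_sap() on a Pi shows announcements but module-rtp-recv still plays nothing, the problem is more likely on the receiving PulseAudio side than on the network.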

Impressions

At first, I was highly skeptical that streaming uncompressed audio over the network was going to result in satisfactory performance. Obviously, it’s necessary for achieving any sort of realtime playback, but I expected to have issues keeping up with the stream, even on GigE, just from a congestion point of view. I’m happy to say that for the most part, there really aren’t issues with this, and the audio quality is quite good. It won’t satisfy the audiophile, and I’d never use it for dedicated music listening with high-quality speakers or headphones. However, for background audio while I’m working and general music around the house, it works well.

I didn’t know what to expect from PulseAudio’s latency matching, which attempts to create a seamless, echo-free transition between zones. After testing it for several days, I can say that I am flat-out amazed. For all intents and purposes, when standing between two zones being fed by two different machines from the “radio station” stream, it’s basically impossible to tell that they’re not tied together on the analog side. I haven’t gone so far as to create a single-tone audio file and try to detect beats between two adjacent systems; I’m sure doing that would make it easier to tell that they’re not perfectly synchronized. However, for casual music listening, it’s very good.
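For anyone who does want to try that single-tone test, a short Python sketch can write a stereo sine-wave WAV file using only the standard library. The 48 kHz, 16-bit format matches the stream settings used above; the 440 Hz frequency, ten-second length, amplitude, and file name are arbitrary choices of mine.

```python
# Write a stereo sine-tone WAV for a by-ear sync test between two zones.
# Assumptions: 48 kHz, 16-bit to match the stream settings above; the
# frequency, duration, amplitude, and file name are arbitrary.
import math
import struct
import wave


def tone_frames(freq_hz: float, seconds: float, rate: int = 48000,
                amplitude: float = 0.3) -> bytes:
    """Interleaved little-endian 16-bit stereo frames of a sine wave."""
    n = int(seconds * rate)
    peak = int(amplitude * 32767)
    frames = bytearray()
    for i in range(n):
        value = int(round(peak * math.sin(2 * math.pi * freq_hz * i / rate)))
        frames += struct.pack("<hh", value, value)  # same sample on L and R
    return bytes(frames)


def write_tone(path: str, freq_hz: float = 440.0, seconds: float = 10.0,
               rate: int = 48000) -> None:
    with wave.open(path, "wb") as wav:
        wav.setnchannels(2)
        wav.setsampwidth(2)  # 2 bytes per sample = 16-bit
        wav.setframerate(rate)
        wav.writeframes(tone_frames(freq_hz, seconds, rate))
```

For example, write_tone("tone-440.wav") creates a file you could copy into the MPD music directory and play while walking between two zones.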

Next Steps

After seeing how well this works for the house’s “radio station,” I have some other thoughts. Each of the Raspberry Pi players is located near a TV. If each of those had a capture device, then it would be possible to stream TV audio from either location to the rest of the house for the “watching the news, but doing other stuff” sort of use case. I figure that in order to make this really useful, I’ll need a web interface that allows me to enable or disable various streams, and control which stream any given player will “subscribe” to. That would let us patch any audio stream to any output easily and dynamically.
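As a possible starting point for that web interface, here is a hypothetical Python data model for the patching itself. It assumes each stream is sent to its own multicast group (module-rtp-send accepts a destination_ip argument) and that each player listens to at most one stream; how a player would actually be repointed, for instance by reloading module-rtp-recv with a different sap_address, is left open. All stream names, addresses, and ports are made up.

```python
# Hypothetical patch-bay model: streams map to multicast groups, and each
# player subscribes to at most one stream. Names and addresses are made up.
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Stream:
    name: str
    group: str  # multicast address the sender would use
    port: int


@dataclass
class PatchBay:
    streams: Dict[str, Stream] = field(default_factory=dict)
    subscriptions: Dict[str, Optional[str]] = field(default_factory=dict)

    def add_stream(self, stream: Stream) -> None:
        self.streams[stream.name] = stream

    def subscribe(self, player: str, stream_name: Optional[str]) -> None:
        """Point a player at a stream, or at None to silence it."""
        if stream_name is not None and stream_name not in self.streams:
            raise KeyError(f"unknown stream {stream_name!r}")
        self.subscriptions[player] = stream_name

    def source_for(self, player: str) -> Optional[Stream]:
        name = self.subscriptions.get(player)
        return self.streams[name] if name is not None else None

    def listeners(self, stream_name: str) -> List[str]:
        return sorted(p for p, s in self.subscriptions.items() if s == stream_name)
```

Keeping the mapping in one place means the web interface only edits subscriptions, and each Pi just needs to learn which group it is assigned to.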

Did you also try it with the onboard audio card? In my case the stream worked for a few seconds (or sometimes not at all) and then stopped. The logs said that the sample rate difference was too high (the PulseAudio server logs showed strange values…).


Yes, the onboard audio should work as well.

The behavior you describe happened to me if (a) I didn’t have the sampling rate the same on the sender and receiver (either 44100 or 48000) or (b) the resample method was still set to the default. The default uses floating-point math to resample, and the Pi can’t keep up with it.

Thanks for the great tutorial. I know a bit about Linux, but I was not familiar with MPD or PulseAudio until now. Thanks so much!

I am having a bit of trouble getting the Raspberry Pi to play back the stream. Here’s what seems to be working:

1) Ampache is properly configured to invoke MPD for playback as I can see the correct entries in the MPD log and, when I enable ALSA audio output in the mpd.conf file, I hear playback through the server’s soundcard.

2) When I run pulseaudio -v on the server as well as the Pi client, I can see the server start the RTP stream and, a few seconds later, the Pi client pick it up and attempt to play whatever it finds.

3) My Raspberry Pi’s HiFiBerry is configured correctly, as I can play a single MP3 using mplayer and hear the audio through the HiFiBerry.

Best I can figure, either MPD isn’t sending the audio to Pulse on the server side or Pulse is not sending the audio to the soundcard on the client side. The entry I used to send MPD audio to Pulse was copied from the example you gave:

```
audio_output {
    type "pulse"
    name "My Pulse Output"
}
```

I don’t mean to bother, but I was wondering if you could help me determine where the problem might be. Any suggestions?